AI-powered video generation is a hot market following OpenAI’s release of the Sora model last month. Two DeepMind alums, Yishu Miao and Ziyu Wang, have now publicly released their video-generation tool Haiper, which runs on the company’s own AI model.

Miao, who previously worked on TikTok’s Global Trust & Safety team, and Wang, who has worked as a research scientist at both DeepMind and Google, started working on the company in 2021 and formally incorporated it in 2022.

The pair has expertise in machine learning and initially worked on the problem of 3D reconstruction using neural networks. After training models on video data, they found that video generation was a more fascinating problem than 3D reconstruction, Miao told TechCrunch on a call. That’s why Haiper shifted its focus to video generation roughly six months ago.

Haiper has raised $13.8 million in a seed round led by Octopus Ventures with participation from 5Y Capital. Before that, angels like Phil Blunsom and Nando de Freitas helped the company raise a $5.4 million pre-seed round in April 2022.

Video-generation service

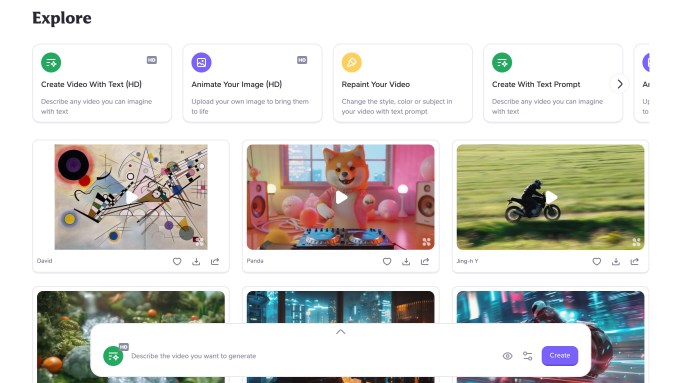

Users can go to Haiper’s site and start generating videos for free by typing in text prompts. However, there are limitations: videos are capped at two seconds in HD, or up to four seconds at slightly lower quality.

The site also offers features such as animating an image and repainting a video in a different style. The company is also working on new capabilities, such as extending a video.

Miao said that the company aims to keep these features free in order to build a community. He noted that it is “too early” in the startup’s journey to think about building a subscription product around video generation. However, it has collaborated with companies like JD.com to explore commercial use cases.

We used one of the original Sora prompts to generate a sample video: “Several giant wooly mammoths approach treading through a snowy meadow, their long wooly fur lightly blows in the wind as they walk, snow-covered trees and dramatic snow-capped mountains in the distance, mid-afternoon light with wispy clouds and a sun high in the distance creates a warm glow, the low camera view is stunning capturing the large furry mammal with beautiful photography, depth of field.”

Building a core video model

While Haiper is currently focusing on its consumer-facing website, it wants to build a core video-generation model that could be offered to others. The company hasn’t made any details about the model public.

Miao said the company has privately invited a number of developers to try its closed API. He expects developer feedback to be very important as the company iterates rapidly on the model. Haiper has also considered open sourcing its models down the line to let people explore different use cases.

The CEO believes that, right now, the key challenge in video generation is solving the uncanny valley problem: the eerie feeling people get when they see AI-generated human-like figures.

“We are not working in solving problems in [the] content and style area, but we are trying [to] work on fundamental issues like how AI-generated humans look while walking or snow falling,” he said.

The company currently has around 20 employees and is actively hiring for multiple roles across engineering and marketing.

Competition ahead

OpenAI’s recently released Sora is probably Haiper’s most prominent competitor at the moment. However, there are other players, such as Google- and Nvidia-backed Runway, which has raised more than $230 million in funding. Google and Meta also have their own video-generation models. Last year, Stability AI announced its Stable Video Diffusion model in research preview.

Rebecca Hunt, a partner at Octopus Ventures, believes that over the next three years, Haiper will need to build a strong video-generation model to differentiate itself in this market.

“There are realistically only a handful of people positioned to achieve this; this is one of the reasons we wanted to back the Haiper team. Once the models get to a point that transcends the uncanny valley and reflects the real world and all its physics there will be a period where the applications are infinite,” she told TechCrunch over email.

While investors are eager to back AI-powered video-generation startups, they also think the technology still has a lot of room for improvement.

“It feels like AI video is at GPT-2 level. We’ve made big strides in the last year, but there’s still a way to go before everyday consumers are using these products on a daily basis. When will the ‘ChatGPT moment’ arrive for video?” a16z’s Justine Moore wrote last year.

An earlier version of this article incorrectly listed Geoffrey Hinton as an angel investor. While Hinton worked with the startup’s founders before the company’s inception, he is not involved as an investor.